Snowflake Data Scientist Certification Training in Gurgaon

Master the Snowflake cloud and prepare for official Snowflake certifications with role-based courses tailored to your specific needs

- Enroll in Snowflake Architecture, Developer, Operations, DevOps, AI / ML, and Networking Certifications

- Experience blended learning through interactive offline and online sessions.

- Job Assured Course

- Course Duration - 2 months

- Get Trained from Industry Experts

Train with real-time course materials, online portals, and hands-on trainer guidance for a personalized learning experience.

Interact actively in sessions guided by leading industry professionals.

Gain professional insights from leading industry experts across domains.

Enhance your career with 42+ in-demand skills and 20+ services

Snowflake Data Scientist Certification Overview

These live Snowflake courses dive deep into designing, developing, deploying, and managing scalable solutions and infrastructure on the Snowflake platform, equipping you for success in today’s fast-evolving technology landscape.

- Benefit from ongoing access to all self-paced videos and archived session recordings

- Success Aimers supports you in gaining visibility among leading employers

- Prepare for 6+ official Snowflake certifications with role-based courses tailored to your specific needs

- Engage with real-world capstone projects

- Engage in live virtual classes led by industry experts, complemented by hands-on projects

- Job interview rehearsal sessions

Demonstrates proficiency in performing data loading and transformation, monitoring and optimizing virtual warehouse performance and concurrency, executing DDL and DML queries, and working with structured, semi-structured, and unstructured data. Additionally, skilled in utilizing cloning, Time Travel, and Fail-safe features for data management, facilitating secure data sharing across Snowflake accounts, and designing and managing Snowflake account structures

Demonstrates proficiency in outlining key data science concepts, implementing Snowflake data science best practices, and preparing data with feature engineering techniques in Snowflake. Skilled in training and deploying machine learning models, as well as leveraging GenAI and LLM capabilities within the Snowflake platform

What are Snowflake Cloud Fundamentals?

Snowflake fundamentals are essential for building data pipelines in data engineering. They cover the full data lifecycle, from ingestion (hydration) through the curated zone to the gold (reporting) layer that delivers business insights, along with automating data pipelines and testing processes. By streamlining workflows and resolving challenges in developing and maintaining cloud data pipelines, they help organizations deliver reliable, high-performance data pipelines faster and more efficiently.

What is the role of Snowflake Certified Data Scientists?

Snowflake Certified Data Scientists build ML applications that let computers learn automatically without human intervention. Machine learning uses statistics and algorithms to recognize patterns and make decisions accordingly, and relies on development frameworks such as TensorFlow and scikit-learn for intelligent automation. This helps organizations improve decision making and drive business growth with better customer satisfaction. ML engineers build optimized ML workflows, handle deployment, and maintain the ML development life cycle to deliver high-precision solutions to clients using ML frameworks and workflow builders.

Who should take this Snowflake Certified Data Scientists course?

The Snowflake Data Scientist course helps accelerate careers in data and cloud organizations. It suits the following roles:

- ML Engineers – Managing the end-to-end ML deployment life cycle using MLflow and model-building templates.

- ML Engineers – Implementing MLOps pipelines using MLflow and ML tools.

- Snowflake ML Developers – Automating ML deployment and workflows using MLflow and ML tools.

- ML Architects – Leading machine learning and AI initiatives within the enterprise.

- Cloud and ML/AI Engineers – Deploying ML applications across environments using ML automation tools such as MLflow, Kubeflow, and TensorBoard.

What are the prerequisites of Snowflake Data Scientist Certification Course?

- High school diploma or an undergraduate degree

- Python scripting, plus familiarity with JSON and YAML

- Foundational IT knowledge along with DevOps and cloud infrastructure skills

- Knowledge of cloud computing platforms such as AWS, Azure, and GCP is an added advantage.

What kinds of job placements and offers follow the Snowflake Data Scientist Certification Course?

The Snowflake Certified Data Scientist course helps accelerate careers in data and cloud organizations, in roles such as:

- ML Engineer – Managing the end-to-end ML deployment life cycle using MLflow and model-building templates.

- ML Engineer – Implementing MLOps pipelines using MLflow and ML tools.

- ML Developer – Automating ML deployment and workflows using MLflow and ML tools.

- ML Architect – Leading machine learning and AI initiatives within the enterprise.

- Cloud and ML/AI Engineer – Deploying ML applications across environments using ML automation tools such as MLflow, Kubeflow, and TensorBoard.

| Training Options | Weekdays (Mon-Fri) | Weekends (Sat-Sun) | Fast Track |
|---|---|---|---|
| Duration of Course | 2 months | 3 months | 15 days |
| Hours / day | 1-2 hours | 2-3 hours | 5 hours |
| Mode of Training | Offline / Online | Offline / Online | Offline / Online |

Snowflake Data Scientist Course Overview

Start your career in Snowflake with this AI certification course in machine learning. It shapes your career around current industry needs for ML automation, using frameworks such as TensorFlow and scikit-learn across every domain, so that organizations can improve decision making and drive business growth with better customer satisfaction.

Data Science

Snowflake SnowPro Advanced: Data Scientist Certification Exam

Getting Started with Data Engineering and ML using Snowpark for Python and Snowflake Notebooks

- Setup Environment: Use stages and tables to ingest and organize raw data from S3 into Snowflake

- Data Engineering: Leverage Snowpark for Python DataFrames in Snowflake Notebook to perform data transformations such as group by, aggregate, pivot, and join to prep the data for downstream applications

- Data Pipelines: Use Snowflake Tasks to turn your data pipeline code into operational pipelines with integrated monitoring

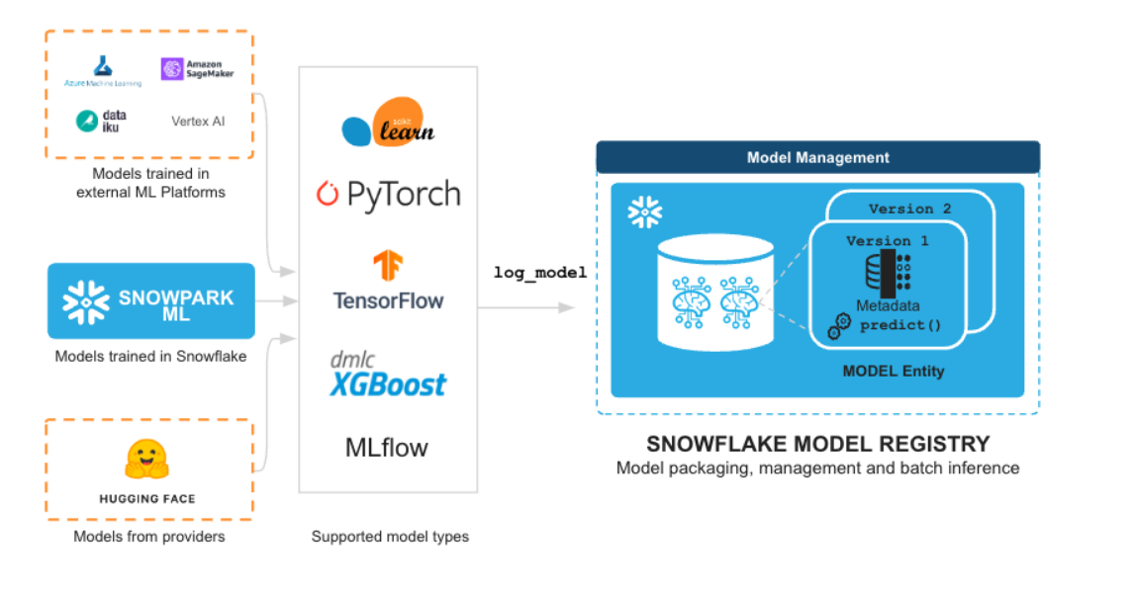

- Machine Learning: Process data and run training job in Snowflake Notebook using the Snowpark ML library, and register ML model and use it for inference from Snowflake Model Registry

- Streamlit: Build an interactive Streamlit application using Python (no web development experience required) to help visualize the ROI of different advertising spend budgets

Getting Started with Snowpark in Snowflake Python Worksheets and Notebooks

- Familiar Client Side Libraries - Snowpark brings deeply integrated, DataFrame-style programming and OSS compatible APIs to the languages data practitioners like to use. It also includes the Snowpark ML API for more efficient ML modeling (public preview) and ML operations (private preview).

- Flexible Runtime Constructs - Snowpark provides flexible runtime constructs that allow users to bring in and run custom logic. Developers can seamlessly build data pipelines, ML models, and data applications with User-Defined Functions and Stored Procedures.

Snowflake native statistical functions

- Summary of functions

- Scalar functions

- Aggregate functions

- Window functions

- Table functions

- System functions

- User-defined functions (UDFs)

Snowpark Python sessions

- Creating a Session for Snowpark Python

- Connect by using the connections.toml file

- Closing a Session
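
The session topics above can be sketched as follows. This is a minimal illustration, not the course's own material: the connection name `myconnection` and every value in it are placeholders, and `render_connection` and `open_session` are hypothetical helpers. The Snowpark import is deferred so that the TOML-rendering part runs even where `snowflake-snowpark-python` is not installed.

```python
def render_connection(name: str, params: dict) -> str:
    """Render one named connection as a TOML table for connections.toml."""
    lines = [f"[{name}]"]
    for key, value in params.items():
        # Assumes plain string values that contain no double quotes.
        lines.append(f'{key} = "{value}"')
    return "\n".join(lines) + "\n"


def open_session(connection_name: str):
    """Create a Snowpark session from a named connection (needs a real account)."""
    # Imported lazily so the rest of this sketch runs without the package.
    from snowflake.snowpark import Session

    return Session.builder.config("connection_name", connection_name).create()


# Placeholder values only; replace them with your own account details.
toml_text = render_connection(
    "myconnection",
    {
        "account": "myaccount",
        "user": "jdoe",
        "password": "******",
        "warehouse": "my_wh",
        "database": "my_db",
        "schema": "my_schema",
    },
)
print(toml_text)
```

With a valid entry saved in your `connections.toml` file, `session = open_session("myconnection")` would open the session, and `session.close()` releases it when the work is done.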

Snowpark-optimized warehouses

- When to use a Snowpark-optimized warehouse

- Configuration options for Snowpark-optimized warehouses

- Creating a Snowpark-optimized warehouse

- Modifying Snowpark-optimized warehouse properties

- Using Snowpark Python Stored Procedures to run ML training workloads

- Billing for Snowpark-optimized warehouses

- Region availability
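
As a rough sketch of the creation and modification topics above, the helpers below build the SQL statements as strings. The warehouse name `snowpark_opt_wh`, the sizes, and both helper functions are illustrative assumptions; the size argument is not validated here, and the available sizes and regions should be checked against the Snowflake documentation.

```python
import re

# Accept only simple unquoted identifiers to keep the generated SQL safe.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_$]*$")


def _check_identifier(name: str) -> str:
    if not _IDENTIFIER.match(name):
        raise ValueError(f"not a simple unquoted identifier: {name!r}")
    return name


def create_snowpark_warehouse_sql(name: str, size: str = "MEDIUM") -> str:
    """Build a CREATE WAREHOUSE statement for a Snowpark-optimized warehouse."""
    return (
        f"CREATE OR REPLACE WAREHOUSE {_check_identifier(name)} WITH "
        f"WAREHOUSE_SIZE = '{size}' "
        "WAREHOUSE_TYPE = 'SNOWPARK-OPTIMIZED'"
    )


def resize_warehouse_sql(name: str, size: str) -> str:
    """Build an ALTER WAREHOUSE statement that changes the warehouse size."""
    return f"ALTER WAREHOUSE {_check_identifier(name)} SET WAREHOUSE_SIZE = '{size}'"


create_stmt = create_snowpark_warehouse_sql("snowpark_opt_wh")
resize_stmt = resize_warehouse_sql("snowpark_opt_wh", "LARGE")
print(create_stmt)
print(resize_stmt)
```

Given an open Snowpark session, each statement could be run with `session.sql(create_stmt).collect()`.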

Snowpark Python DataFrame API

- Working with DataFrames in Snowpark Python

- Setting up the Examples in a Python Worksheet

- Constructing a DataFrame

- Specifying How the Dataset Should Be Transformed

- Joining DataFrames

- Specifying Columns and Expressions

- Using Double Quotes Around Object Identifiers (Table Names, Column Names, etc.)

- Using Literals as Column Objects

- Casting a Column Object to a Specific Type

- Chaining Method Calls

- Retrieving Column Definitions

- Performing an Action to Evaluate a DataFrame

- Saving Data to a Table

- Creating a View From a DataFrame

- Working With Files in a Stage

- Working with Semi-Structured Data

- Flattening an Array of Objects into Rows

- Submit Snowpark queries concurrently

- Return the Contents of a DataFrame as a Pandas DataFrame

- Snowpark DataFrames vs Snowpark pandas DataFrame: Which should I choose?

Snowpark Python user defined functions (UDFs)

- Creating User-Defined Functions (UDFs) for DataFrames in Python

- Specifying Dependencies for a UDF

- Using Artifact Repository packages in a UDF

- Creating an Anonymous UDF

- Creating and Registering a Named UDF

- Creating a UDF from a Python source file

- Reading Files with a UDF

- Reading Dynamically-Specified Files with SnowflakeFile

- Writing files from Snowpark Python UDFs and UDTFs

- Using Vectorized UDFs

Snowpark Python user defined table functions (UDTFs)

- Creating User-Defined Table Functions (UDTFs) for DataFrames in Python

- Implementing a UDTF Handler

- Registering a UDTF

- Defining a UDTF’s Input Types and Output Schema
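
To make the handler, registration, and schema topics above concrete, here is a small sketch. The `WordCount` handler, the function name `word_count`, and the output column names are invented for illustration. The handler is plain Python, so it can be exercised locally; the registration helper needs a live Snowpark session and imports Snowpark only when called.

```python
class WordCount:
    """UDTF handler: emits one (word, occurrences) row per distinct word."""

    def process(self, text: str):
        counts = {}
        for word in text.lower().split():
            counts[word] = counts.get(word, 0) + 1
        # A UDTF returns rows as tuples that match the output schema.
        for word, occurrences in counts.items():
            yield (word, occurrences)


def register_word_count(session):
    """Register the handler as a UDTF (requires an open Snowpark session)."""
    # Imported lazily so the handler can be tested without Snowpark installed.
    from snowflake.snowpark.types import (
        IntegerType,
        StringType,
        StructField,
        StructType,
    )

    return session.udtf.register(
        WordCount,
        output_schema=StructType(
            [
                StructField("word", StringType()),
                StructField("occurrences", IntegerType()),
            ]
        ),
        input_types=[StringType()],
        name="word_count",
        replace=True,
    )


# Local check of the handler logic, no Snowflake connection involved.
rows = list(WordCount().process("snow park Snow"))
print(rows)
```

Keeping the handler free of Snowflake imports is a deliberate choice in this sketch: the row-producing logic can be unit tested before it is deployed.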

Snowpark Python stored procedures

- Creating Stored Procedures for DataFrames in Python

- Using Artifact Repository packages in a Stored Procedure

- Using Third-Party Packages from Anaconda in a Stored Procedure

- Creating an Anonymous Stored Procedure

- Reading Files with a Stored Procedure

An Image Recognition App in Snowflake using Snowpark Python, PyTorch, Streamlit and OpenAI

- How to work with Snowpark for Python APIs

- How to use pre-trained models for image recognition using PyTorch in Snowpark

- How to create Snowpark Python UDF and deploy it in Snowflake

- How to call Snowpark for Python UDF in Streamlit

- How to run Streamlit applications

Understanding data science concepts and machine learning lifecycle

- Data preparation, cleaning, and feature engineering

- Training, validating, and interpreting machine learning models

- Model lifecycle management and visualization for business cases

- Outline data science concepts

- Implement Snowflake data science best practices

- Prepare data and use feature engineering in Snowflake

- Train and use machine learning models

- Use GenAI and LLM capabilities in Snowflake

Snowpark and Gen AI: Learn about Snowpark and Snowflake's Gen AI capabilities, including Cortex features

- Heavy focus on Cortex features like Analyst, Agents, Search, and fine-tuning, plus Snowflake Copilot integration

Document AI: Understand how to set up, preprocess, and extract data from documents using Snowflake

- Setup, pre-processing docs (invoices/contracts), extraction with PARSE_DOCUMENT

Build analytical dashboards and ML models on a Snowflake data pipeline, then visualize the results of data analysis (Python, SQL) with tools such as Tableau and Power BI.

Project Description: Ingest data from multiple sources into the pipeline's raw layer through Snowflake connectors. After business rules and transformations are applied with ETL tools such as Snowpark, Talend, and Informatica IICS, the data is stored in the data warehouse. After data modeling, data marts are prepared in the Snowflake derived zone to bring data into the reporting layer, which integrates with BI tools (Tableau and Power BI).

Automated ingestion framework pipeline: build analytical AI models on a Snowflake data pipeline and report to Excel, Tableau, or Power BI dashboards

The Snowflake pipeline is automated through Snowpipe, which loads data within minutes after files are added to a stage and submitted for ingestion. Snowpipe loads files in micro-batches, instead of requiring COPY statements to be run manually on a schedule to load larger batches. After business rules and transformations are applied with the Snowpark library, Talend, Informatica IICS, and other tools, the data is stored in the data warehouse.
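
A pipe of the kind described here wraps a COPY statement so that Snowflake runs it as files arrive. The sketch below only builds that statement as a string; the pipe, table, and stage names are hypothetical, the helper does not validate its arguments, and `AUTO_INGEST = TRUE` assumes an external stage with cloud event notifications already configured.

```python
def create_pipe_sql(pipe: str, table: str, stage: str, file_type: str = "CSV") -> str:
    """Build a CREATE PIPE statement that wraps a COPY INTO command."""
    return (
        f"CREATE OR REPLACE PIPE {pipe} AUTO_INGEST = TRUE AS "
        f"COPY INTO {table} FROM @{stage} "
        f"FILE_FORMAT = (TYPE = '{file_type}')"
    )


# Hypothetical object names for the raw layer of the project.
pipe_stmt = create_pipe_sql("raw_orders_pipe", "raw_orders", "raw_orders_stage")
print(pipe_stmt)
```

With an open Snowpark session, `session.sql(pipe_stmt).collect()` would create the pipe; the scheduled alternative would run the same `COPY INTO` statement from a task instead.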

After completing this training program, you will be able to launch your career in Snowflake and machine learning as a Snowflake Certified Data Scientist professional.

With the ML certification in hand, you can showcase it on LinkedIn, Meta, Twitter, and other platforms to boost your visibility.

- Get your certificate upon successful completion of the course.

- Certificates for each course

- Snowflake Model Registry

- Snowflake Core

- Snowflake AI

- Snowflake ML

- Snowpark

- SnowSQL

- Snowflake Cloud

- Snowflake Virtual Warehouse

- Snowpipe

- Snowflake Continuous Ingestion

- Snowflake APIs

- Snowflake Pipeline

- Snowflake Kafka

45% - 60%

Designed to provide guidance on current interview practices, personality development, soft skills enhancement, and HR-related questions

Receive expert assistance from our placement team to craft your resume and optimize your Job Profile. Learn effective strategies to capture the attention of HR professionals and maximize your chances of getting shortlisted.

Engage in mock interview sessions led by our industry experts to receive continuous, detailed feedback along with a customized improvement plan. Our dedicated support will help refine your skills until you land your desired job in the industry.

Join interactive sessions with industry professionals to understand the key skills companies seek. Practice solving interview question worksheets designed to improve your readiness and boost your chances of success in interviews

Build meaningful relationships with key decision-makers and open doors to exciting job prospects at product- and service-based partner companies.

Your path to job placement starts immediately after you finish the course with guaranteed interview calls

Why should you choose to pursue a Snowflake Certified Data Scientist course with Success Aimers?

Success Aimers' teaching methodology is built on real-time job scenarios that cover industry use cases, which helps you build a career in machine learning. Training is delivered by leading industry experts, so students can answer interview questions confidently and excel in real-world projects.

What is the time frame to become competent as a Snowflake Data Scientist?

Becoming a successful ML engineer typically requires 1-2 years of consistent learning, with 3-4 dedicated hours a day.

With Success Aimers, leading industry experts and specialized trainers help you reach that level of mastery in about six months to a year, because our curriculum and labs are built around hands-on projects.

Will skipping a session prevent me from completing the course?

Missing a live session doesn’t impact your training, because every live session is recorded and students can refer to the recording later.

What industries lead in Snowflake implementation?

- Manufacturing

- Financial Services

- Healthcare

- E-commerce

- Telecommunications

- BFSI (Banking, Finance & Insurance)

- Travel Industry

Does Success Aimers offer corporate training solutions?

At Success Aimers, we have tied up with 500+ corporate partners to support their talent development through online training. Our corporate training programme is based on industry use cases and focused on the ever-evolving tech space.

How is the Success Aimers Snowflake Data Scientist Certification Course reviewed by learners?

Our Snowflake Data Scientist course features a well-designed curriculum focused on industry needs and aligned with the ever-changing demands that ML places on today’s workforce.

Our training curriculum has also been reviewed by alumni, who praise the thorough content and the practical, real-world use cases covered during the training. Our program helps working professionals upgrade their skills and grow further in their roles…

Can I attend a demo session before I enroll?

Yes. We offer a one-to-one discussion before the training and schedule a demo session so you can get a feel for the trainer's teaching style and ask questions about the training programme, placements, and job growth after completion.

What batch size do you consider for the course?

On average, we keep 5-10 students in a batch so that sessions stay interactive and the trainer can focus on each individual rather than a large group.

Do you offer learning content as part of the program?

Students receive training content from the trainer, including code snippets, PPT materials, and recordings of all batch sessions.
